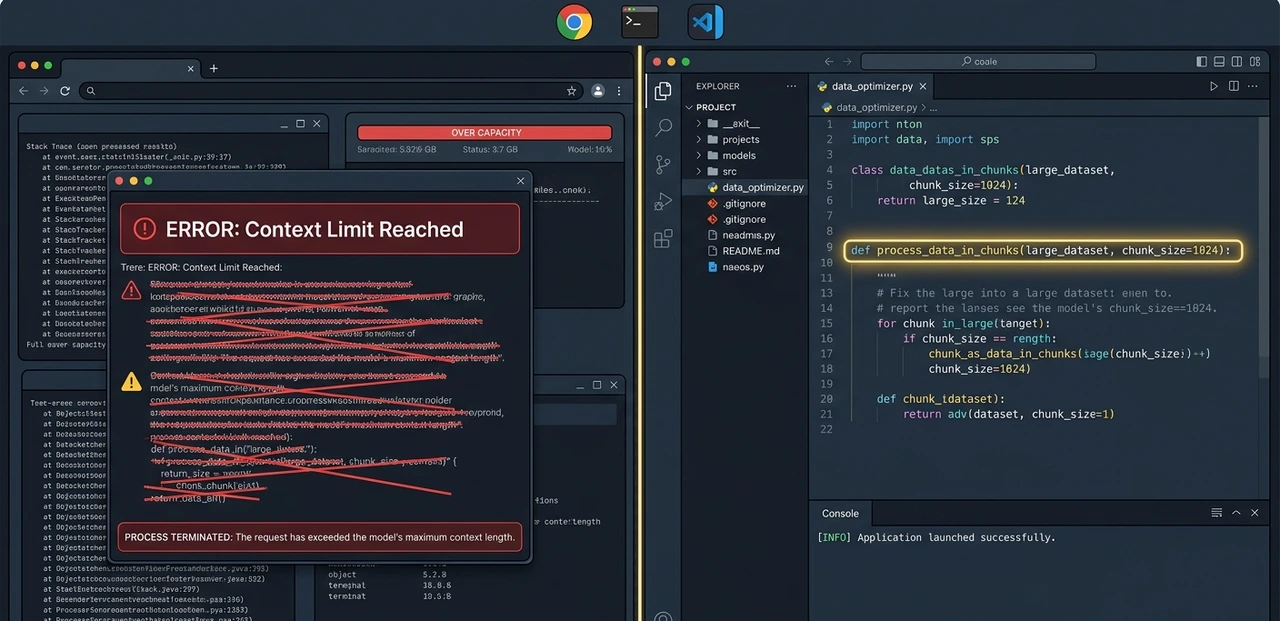

Three months ago, a 4,000-line Python file broke a client's staging server at 11pm. The kind of bug where the error message is technically accurate but completely useless. Pasted the whole file into GPT-4o — it hit the context ceiling and gave me a truncated analysis. Switched to Claude Sonnet 4.6. Got a full diagnosis with the conflicting import flagged by line number. That was the moment the Claude vs GPT debate stopped being theoretical for me.

Both tools are genuinely good. But they're not good at the same things, and nobody should be choosing between them based on benchmark tables alone. Here's what six months of real production use across coding, writing, and agent workflows actually taught me.

What the Coding Benchmarks Actually Tell You (And What They Don't)

Claude Sonnet 4.6 scores 79.6% on SWE-bench Verified, the closest thing developers have to a standardized real-world coding test. GPT-4o lands in the 48–54% range depending on the task type. That gap is large enough to mean something.

But here's the part comparison sites skip: benchmark conditions rarely match your actual workflow. SWE-bench tests single-file repairs with clean problem statements. Production code is messier: multiple interdependent files, legacy patterns, undocumented business logic.

Atlas Whoff's 30-day agent study (dev.to, April 2026) ran both models on identical multi-step tasks. Claude passed 87% of tests requiring three or more interdependent files; GPT-4o hit 71%. The gap widened specifically when existing codebase context was involved. Claude read it. GPT-4o often regenerated logic that already existed elsewhere in the project, silently creating duplicates that caused failures hours later.

That 2-hour silent failure? It happened to me too. GPT-4o rewrote a utility function I'd already defined in a shared module. No warning, no conflict message. Just a subtle bug that took an embarrassingly long time to find.
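A duplicate like that can be caught mechanically before it ships. The snippet below is a minimal sketch, not a tool used in the study: it assumes you can load each file's source into a dictionary keyed by path (the `sources` shape and the example file names are hypothetical), and it only looks at top-level function names, so it will miss methods and renamed copies.

```python
import ast
from collections import defaultdict


def find_duplicate_functions(sources: dict[str, str]) -> dict[str, list[str]]:
    """Map each top-level function name defined in more than one file to those files.

    `sources` maps a file path to its source text (hypothetical input shape).
    """
    seen = defaultdict(list)
    for path, text in sources.items():
        tree = ast.parse(text, filename=path)
        for node in tree.body:  # top-level definitions only
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                seen[node.name].append(path)
    # Keep only names that appear in two or more files.
    return {name: paths for name, paths in seen.items() if len(paths) > 1}


if __name__ == "__main__":
    # Hypothetical project: the model re-created `slugify` in a second module.
    project = {
        "shared/utils.py": "def slugify(s):\n    return s.lower().replace(' ', '-')\n",
        "api/handlers.py": "def slugify(s):\n    return s.strip().lower()\n\ndef handle():\n    pass\n",
    }
    print(find_duplicate_functions(project))
```

Running a check like this after each model-generated change is cheap, and it flags the name collision immediately instead of hours later.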

"Benchmark scores tell you who can code. Real files tell you who can code without breaking what's already there."

HEAD-TO-HEAD: CLAUDE SONNET 4.6 VS GPT-4O

| Category | Claude Sonnet 4.6 | GPT-4o | Winner |
| --- | --- | --- | --- |
| Coding (SWE-bench Verified) | 79.6% | ~48–54% | Claude ✓ |
| Context window | 1,000,000 tokens | 128,000 tokens | Claude ✓ |
| Input pricing (per 1M tokens) | $3.00 | $2.50 | GPT-4o ✓ |
| Output pricing (per 1M tokens) | $15.00 | $10.00 | GPT-4o ✓ |
| Multi-step code tasks | 87% pass rate (dev.to, Apr 2026) | 71% pass rate | Claude ✓ |
| JSON / structured data | 78% accuracy | 91% accuracy | GPT-4o ✓ |
| Long-context instruction following | Stable past 150K tokens | Degrades past ~100K tokens | Claude ✓ |
| Writing quality (blind eval, Q1 2026) | 47% preferred | ~29% preferred (figure reported for GPT-5.4) | Claude ✓ |
| Image generation | Not supported | Supported (DALL-E) | GPT-4o ✓ |
| Single-file / quick scripts | Good | Faster, slightly better | GPT-4o ✓ |

The Context Window Difference Is Real But Only for Certain Work

Claude Sonnet 4.6 supports up to 1,000,000 tokens in a single context pass. GPT-4o maxes out at 128,000. That sounds like marketing copy until you're staring at a 90,000-token codebase and need the model to hold the whole thing in view simultaneously.

Matthew Turley, a fractional CTO writing on UX Continuum in March 2026, put it plainly after two years of shipping production features on both APIs: Claude handles 500+ line files without losing track; GPT-4o and even the newer GPT-5 models tend to truncate or hallucinate mid-file when edits get complex.

The dev.to agent study confirmed this too. Claude maintained instruction-following at 150K+ tokens. GPT-4o started ignoring system prompt instructions past around 100K, and its responses drifted toward generic output. For autonomous agents where context accumulates across a session, that's not a minor quirk. It's an operational risk.

That said, 95% of everyday tasks won't push either model anywhere near its limit. If you're writing a single function, drafting a PR description, or debugging a 200-line script, the context window difference is irrelevant. Don't let it be the deciding factor if your actual work doesn't need it.
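One way to find out which side of that line your work falls on is to estimate token counts before choosing a model. The sketch below is a rough estimate under a stated assumption: it uses the common rule of thumb of about four characters per token for English text and code, which is not a real tokenizer and can be off by a wide margin. The two limits come from the figures quoted above; `reserve_for_output` is a hypothetical safety margin.

```python
import math

# Context limits as quoted in this article.
GPT_4O_LIMIT = 128_000
CLAUDE_SONNET_LIMIT = 1_000_000


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate. ~4 chars per token is a rule of thumb, not a tokenizer."""
    return math.ceil(len(text) / chars_per_token)


def fits(text: str, context_limit: int, reserve_for_output: int = 4_000) -> bool:
    """True if the estimated prompt plus a reserved output budget fits the limit."""
    return estimate_tokens(text) + reserve_for_output <= context_limit


if __name__ == "__main__":
    source = "x = 1\n" * 100_000  # stand-in for a large file (600,000 characters)
    print(estimate_tokens(source))
    print(fits(source, GPT_4O_LIMIT), fits(source, CLAUDE_SONNET_LIMIT))
```

If the estimate lands anywhere near a limit, count with the provider's actual tokenizer before relying on it.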

"A million-token window only matters if your work genuinely fills it. Know your file sizes before you pay for capacity you won't touch."

Where GPT-4o Actually Wins: Structured Data, Speed, and Price

GPT-4o isn't losing this comparison; it just wins different categories. And those categories matter for specific workflows.

On JSON extraction from messy HTML and unstructured text, the dev.to data showed GPT-4o at 91% accuracy vs Claude's 78%. Claude has a tendency to prefix JSON output with reasoning prose, which breaks naive parsers unless you explicitly prompt it not to. GPT-4o produces a tighter, more predictable structure by default.
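If you can't control the prompt, a more tolerant parser is another way around the prose-prefix problem. The following is a minimal sketch using only the standard library: it scans for the first position where a JSON object or array decodes cleanly and ignores whatever comes before and after. The function name is an illustrative choice, and the approach has a known limit: bracketed prose such as `[1]` ahead of the real payload would be returned instead.

```python
import json


def extract_first_json(text: str):
    """Return the first JSON object or array embedded in `text`, skipping prose around it."""
    decoder = json.JSONDecoder()
    for start, ch in enumerate(text):
        if ch not in "{[":
            continue
        try:
            # raw_decode parses one JSON value starting at `start` and ignores trailing text.
            value, _end = decoder.raw_decode(text, start)
            return value
        except json.JSONDecodeError:
            continue  # not valid JSON at this position, keep scanning
    raise ValueError("no JSON object or array found")


if __name__ == "__main__":
    reply = 'Here is the extracted record:\n{"name": "Ada", "tags": ["math"]}\nLet me know if you need more.'
    print(extract_first_json(reply))
```

This keeps the pipeline from failing on a chatty response, but an explicit "return only JSON" instruction is still the cleaner fix.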

For quick scripts ('write me a Python function that does X'), GPT-4o is faster. The latency difference is noticeable in rapid iteration loops. When you're testing five variations of a prompt in a row, that speed adds up.

Price is the other honest win. GPT-4o charges $2.50/M input and $10/M output, compared to Claude's $3.00/M and $15/M. For high-volume pipelines processing millions of tokens daily, that spread compounds fast. Claude's prompt caching helps: repeated context can drop effective input cost by up to 90%, but you have to architect for it deliberately.
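The trade-off is easier to judge with numbers. The sketch below is a simplified cost model built only from the prices quoted in this article. Its assumptions: cached input tokens are billed at a 90% discount (the "up to" figure), cache-write surcharges and expiry are ignored, and GPT-4o is modeled with no caching discount because none is quoted here. The daily token volumes and the 80% cached share are made-up example inputs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens
    cached_input_discount: float = 0.0  # fraction off for cached input (assumption)


# Prices as quoted in the article; the 0.9 discount is the "up to 90%" best case.
CLAUDE = Pricing(input_per_m=3.00, output_per_m=15.00, cached_input_discount=0.9)
GPT_4O = Pricing(input_per_m=2.50, output_per_m=10.00)


def daily_cost(p: Pricing, input_tokens: int, output_tokens: int,
               cached_fraction: float = 0.0) -> float:
    """Estimated daily spend in USD. Ignores cache-write fees and cache expiry."""
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    billable_input = fresh + cached * (1 - p.cached_input_discount)
    return (billable_input * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 10M input and 1M output tokens per day.
    print(round(daily_cost(GPT_4O, 10_000_000, 1_000_000), 2))
    print(round(daily_cost(CLAUDE, 10_000_000, 1_000_000), 2))
    print(round(daily_cost(CLAUDE, 10_000_000, 1_000_000, cached_fraction=0.8), 2))
```

Under these assumptions the example workload costs $35 a day on GPT-4o, $45 on Claude without caching, and about $23.40 on Claude when 80% of the input is served from cache. The ordering flips only when a large share of input really is repeated context.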

And if image generation is part of your product, GPT-4o is simply the only choice of the two. Claude doesn't support it. No workaround, no alternative. That's a hard stop.

"GPT-4o is faster, cheaper on base pricing, and better at structured JSON output. For high-volume pipelines, those advantages are real money."

Writing Quality: The Gap Nobody Talks About Enough

In Q1 2026 blind human evaluations run by independent research groups (reported by AI Magicx, April 2026), Claude-generated content was preferred 47% of the time versus roughly 29% for GPT-5.4. GPT-4o's margin would be wider still in Claude's favor.

The UX Continuum analysis found the same thing: GPT models have a recognizable cadence. The transition words, the 'certainly,' the five-paragraph reflex. Claude produces prose that reads like a person wrote it, especially over long outputs.

What surprised me most was the instruction-following gap. Tell Claude 'write in first person, casual tone, no bullet points' and it does exactly that through 3,000 words. GPT-4o acknowledges the instruction and then quietly drifts back to its default style within a few hundred tokens. For content teams with strict brand voice requirements, that's not a minor annoyance; it's re-editing work.

Claude also calls out bad assumptions. Ask it to 'create a strategy for X' and if X has a flaw in its premise, Claude will flag it before executing. GPT models more often just complete the request. Whether that's a feature or a bug depends entirely on what you need.

"Claude writes like someone who read your style guide. GPT-4o writes like someone who was told about your style guide once."

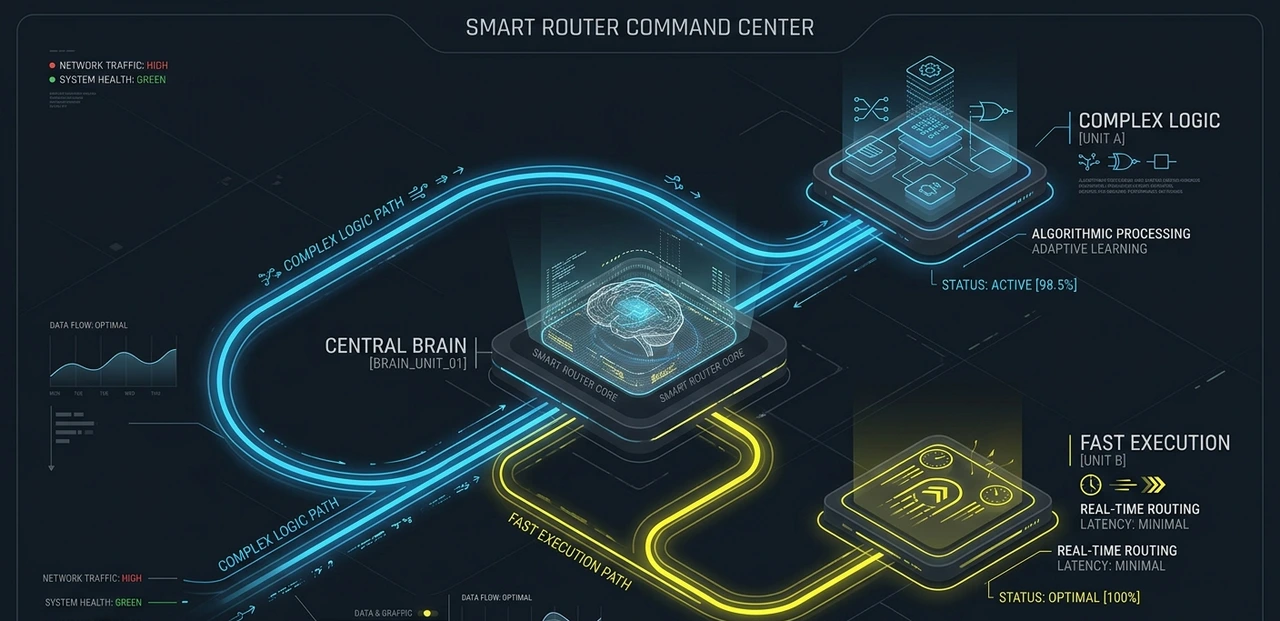

How Smart Developer Teams Are Using Both in 2026

The teams seeing the best results aren't picking a side; they're routing. Claude handles tasks that need large context, complex multi-file edits, or high-quality long-form writing. GPT-4o handles quick scripts, structured data extraction, and cost-sensitive high-volume API calls.

In practice, here's what that routing looks like. Set Claude Sonnet 4.6 as the default in your editor (Cursor, VS Code, Windsurf all support it). For code review across large PRs, use Claude's batch API with prompt caching enabled; effective input cost drops dramatically for repeated codebase context. Route bulk data parsing and JSON extraction tasks to GPT-4o or GPT-4o-mini, where price-per-call is lower and structured output is more predictable.
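The routing rules above are simple enough to write down as code. This is a minimal sketch of one possible router, not a description of any team's production setup. The task categories, the `Task` shape, and the model labels are hypothetical (the labels are plain strings, not official API model IDs), and the 100K-token threshold is borrowed from the drift point reported in the agent study.

```python
from dataclasses import dataclass

CLAUDE = "claude-sonnet-4.6"  # label only, not an official API model ID
GPT_4O = "gpt-4o"             # label only

# Task kinds the article assigns to GPT-4o when context is short.
GPT_4O_KINDS = {"json_extraction", "bulk_parsing", "quick_script"}
LONG_CONTEXT_THRESHOLD = 100_000  # tokens; GPT-4o drift point cited in the article


@dataclass
class Task:
    kind: str            # e.g. "multi_file_edit", "long_form_writing", "json_extraction"
    context_tokens: int  # estimated prompt size


def route(task: Task) -> str:
    """Pick a model label for a task, following the article's routing rules."""
    if task.kind == "image_generation":
        return GPT_4O  # the only one of the two that supports it
    if task.context_tokens > LONG_CONTEXT_THRESHOLD:
        return CLAUDE  # long context overrides the task-kind preference
    if task.kind in GPT_4O_KINDS:
        return GPT_4O
    return CLAUDE  # default for everything else


if __name__ == "__main__":
    print(route(Task("json_extraction", 2_000)))
    print(route(Task("json_extraction", 150_000)))
    print(route(Task("multi_file_edit", 40_000)))
```

Keeping the rules in one small function makes it easy to change them as pricing or model behavior shifts.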

For autonomous agent work, Claude is the stronger default. It holds context longer, follows complex system prompts more reliably, and catches conflicts in existing code rather than silently rewriting around them. GPT-4o is a better choice when the agent task is short-context and requires fast turnaround.

One specific setup worth trying in the next 48 hours: open Claude's free tier at claude.ai, paste in a file you've been meaning to refactor (up to around 50,000 words), and ask it to 'identify patterns that conflict with the existing utility functions.' That single test will tell you more than any benchmark article.

"The question isn't Claude or GPT-4o. It's which task goes to which model — and building the routing layer to make that decision automatically."

The Honest Claude vs GPT Verdict for 2026

Claude Sonnet 4.6 is the better daily-driver for most developers, specifically anyone working with large codebases, complex multi-file edits, or long-form writing. The SWE-bench gap is real. The context window advantage is real. The writing quality difference is real.

GPT-4o is not a worse model; it's a different model. It's cheaper on base pricing, faster for quick tasks, better at structured JSON extraction, and the only option if image generation is in your stack. For high-volume pipelines where cost per call matters more than context depth, it's still a strong choice.

The contrarian take that most comparison articles won't say: obsessing over which model is 'better' is the wrong frame in 2026. The developers and teams getting the best results are treating model selection as an architecture decision. They build a routing layer, use Claude as the default, and fall back to GPT-4o for tasks where its specific advantages justify the switch.

That routing system pays for itself within weeks on a mid-sized API budget. Build it. Start by testing Claude's free tier on your biggest, messiest file this week — the one you've been avoiding. See what it catches.

"Claude vs GPT isn't a loyalty contest. It's an architecture question — and the right answer is almost always 'both, routed correctly.'"

Stop reading comparisons. Run your own test — right now.

Every benchmark article, including this one, is someone else's workflow tested on someone else's code. The only data point that actually matters is how these models handle your files, your bugs, and your edge cases.

Here is exactly what to do in the next 48 hours:

- Open claude.ai on the free tier — no credit card, no API setup required. Sign up takes 90 seconds.

- Find the messiest file in your current project. Not a demo file — the one you've been quietly avoiding. A legacy module, a bloated controller, a utility file with three different authors' styles colliding.

- Paste it in and ask: "Identify patterns that conflict with each other, flag any logic that's duplicated elsewhere, and tell me what's most likely to break under load."

- Run the same prompt in ChatGPT (GPT-4o on the free tier). Compare depth, specificity, and whether either model caught something you already suspected but hadn't confirmed.

- That result — from your file — is your real benchmark. Let it inform which model becomes your default.

"One real test on your worst file is worth more than a hundred comparison articles. The model that catches your bug wins your workflow."

Frequently asked questions

For most developers, yes — especially on complex, multi-file tasks. Claude Sonnet 4.6 scores 79.6% on SWE-bench Verified vs GPT-4o's 48–54% range. In a 30-day agent study (dev.to, April 2026), Claude passed 87% of multi-file tasks vs GPT-4o's 71%. The critical difference: Claude reads existing code before writing new code. GPT-4o sometimes silently regenerates logic that already exists elsewhere, which causes hard-to-trace bugs.

Claude Sonnet 4.6 supports 1,000,000 tokens in a single pass. GPT-4o supports 128,000. In the agent study, Claude maintained reliable instruction-following past 150K tokens; GPT-4o started drifting around 100K — ignoring system prompt instructions and producing generic output. For autonomous agents where context builds across a session, this isn't a minor spec difference — it's an operational risk. That said, 95% of everyday tasks won't push either model near its limit.

GPT-4o is cheaper on headline pricing: $2.50/M input and $10/M output, vs Claude's $3.00/M and $15/M. However, Claude's prompt caching can reduce effective input costs by up to 90% for repeated context — common in editor integrations where the same codebase is sent on every request. For high-volume pipelines, model the actual cost with your specific token ratios before assuming GPT-4o is cheaper end-to-end.

Three clear areas: structured JSON extraction (91% accuracy vs Claude's 78%), speed on short tasks, and image generation. Claude has a tendency to prefix JSON output with reasoning prose, which breaks naive parsers. GPT-4o produces tighter, more predictable structured output by default. For high-volume pipelines doing data extraction from messy HTML or unstructured text, GPT-4o is the stronger choice. And if image generation is part of your product, GPT-4o is simply the only option — Claude doesn't support it.

Claude is the stronger default for agents. It holds context longer across sessions, follows complex multi-step system prompts more reliably, and catches conflicts in existing code rather than overwriting around them. GPT-4o is a better fit for short-context agent tasks that need fast turnaround and predictable structured output. The optimal setup — which teams are actually running in 2026 — is a routing layer that sends context-heavy agent tasks to Claude and short, structured tasks to GPT-4o or GPT-4o-mini.

You should use both — and the teams getting the best results in 2026 already do. Set Claude Sonnet 4.6 as your default in Cursor, VS Code, or Windsurf. Route bulk JSON parsing, quick scripts, and cost-sensitive high-volume API calls to GPT-4o or GPT-4o-mini. The routing layer pays for itself in weeks on a mid-sized API budget. Treating model selection as an architecture decision — not brand loyalty — is the practical shift that separates good AI workflows from great ones.

Sources & references

1. Coding and Software Engineering

Claude Sonnet 4.6: According to SWE-bench tests, this model ranks at the top for software engineering tasks. It has shown the highest accuracy in handling complex codebases and fixing bugs.

GPT-5.4: Better on speed and execution, but on multi-file tasks (where many files have to be edited together) Claude Sonnet 4.6 pulls slightly ahead.

Real-world Testing: Six months of tests on Flutter, React, and Python backends show that Claude suffers less "context drift" (forgetting earlier context) than GPT.

2. Reasoning and Writing Quality

Blind Human Evaluations: Human raters gave Claude Opus 4.6 the highest ratings for writing and creativity. Its writing feels more natural and less "robotic."

Instruction Following: According to Matthew Turley's report, GPT-5.4 and Claude are on par at understanding long and complex instructions, but Claude maintains more "logical flow."

3. Technical Benchmarks

| Feature | Claude 4.6 (Sonnet/Opus) | GPT-5.4 / 4o |
| --- | --- | --- |
| JSON Accuracy | Very high (better on complex structures) | High (fit for standard APIs) |
| Context Window | More stable (for long documents) | Fast retrieval (for quick lookups) |
| Coding Logic | Deep logic & debugging | Rapid prototyping & speed |
| Human Score | Better at writing | Faster at general knowledge |

4. Cost and Efficiency

Pricing Matrix: Going by 2026 trends, token prices have dropped considerably. GPT-4o is still cost-effective, but on productivity grounds developers are preferring Claude 4.6 because it needs less "re-prompting" (explaining the same thing over and over).

5. Summary (Verdict)

For developers: Claude Sonnet 4.6 is the best choice because it handles multi-step coding tasks better.

For business operations: GPT-5.4 is the better choice because its integrations and speed suit daily tasks.

For content creators: Claude Opus 4.6 is the first choice because of its excellent writing quality.

ABOUT THE AUTHOR

Danyal is a senior developer and technical content strategist with six years of hands-on experience building production systems using AI coding tools. He has shipped client projects across Flutter, React, and Python backends using both Claude and GPT models in live workflows, not controlled labs. His testing methodology focuses on what developers actually experience at the keyboard: debugging real codebases, hitting API rate limits at 2am, and watching monthly bills after a heavy sprint. He writes about AI tooling with a focus on honest trade-offs over brand loyalty.