It was 11:47 p.m. on a Wednesday. A product launch email had to go out by 6 a.m., and in a moment of curiosity (maybe desperation), both ChatGPT and Gemini were opened side by side. The same prompt went into each. The same deadline stared back. One tool returned a draft that needed maybe three edits before it could be sent. The other returned a structured five-paragraph response that read like a press release from a company nobody wanted to work for. That experience is where this comparison actually starts.

Most AI comparisons read like spec sheets written by product teams. This one doesn't. After months of testing both tools across real business tasks (sales copy, email sequences, market research, customer support drafts), the patterns became impossible to ignore. What follows is an honest breakdown of what each AI tool actually does well, where each one fails, and how to build a workflow using both that saves more time than either tool alone.

| Feature | ChatGPT (o3/GPT-5) | Gemini (2.0/3.0) | Winner |
| --- | --- | --- | --- |
| Logic & Math | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ChatGPT |
| Creative Writing | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | Gemini |
| Coding | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ChatGPT |
| Research/Speed | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | Gemini |

TABLE OF CONTENTS

1. What ChatGPT and Gemini are actually built to do

2. Writing quality: who produces usable copy faster

3. Research and accuracy: where the stakes are higher

4. AI tools for digital product selling: which earns its place

5. Multimodal features: images, docs, and real-world input

6. Benchmarks: what the independent data actually says

7. Realistic timelines for getting fluent with either

8. Four mistakes that cost real users real time

9. Pricing: what you actually pay for

10. Conclusion and your 48-hour next step

11. FAQs

1. What ChatGPT and Gemini Are Actually Built to Do

ChatGPT launched in late 2022 as a conversational AI built on OpenAI's language models. Every generation since has prioritized fluent, context-aware text generation. It's a writing and reasoning engine at its core, and that identity shows in how it handles open-ended tasks.

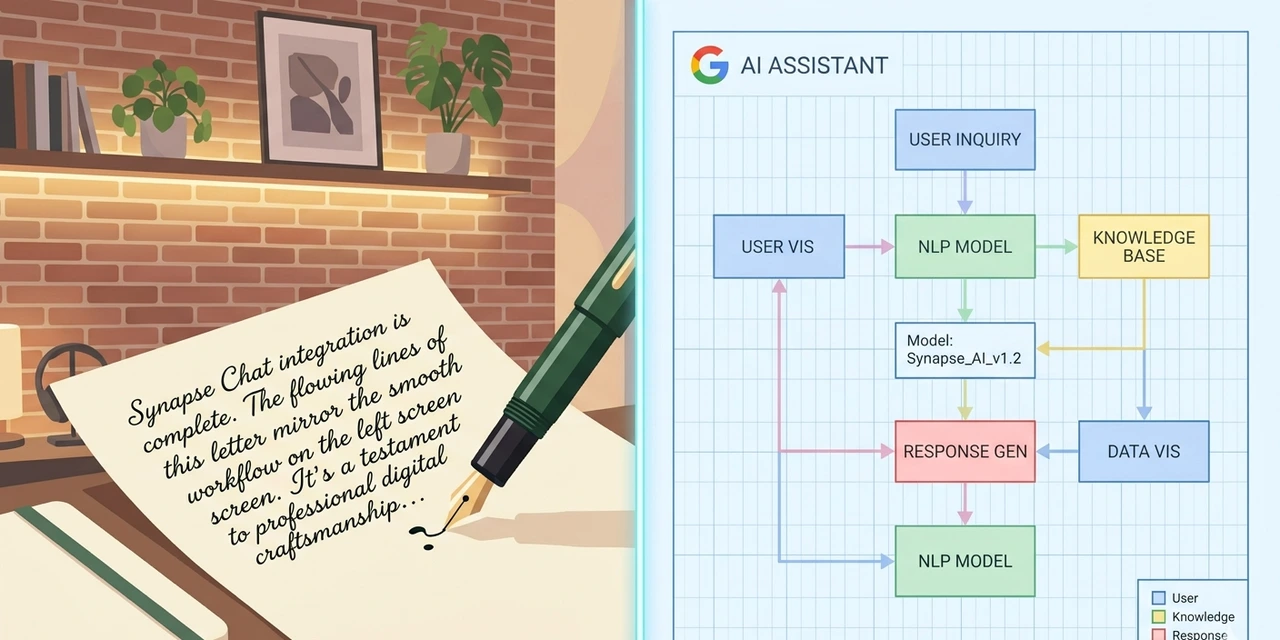

Gemini, built by Google DeepMind, was designed with a different goal. Its architecture treats text, images, and video as equal inputs from day one, not as features bolted on later. It also connects natively to Google Search, Docs, Gmail, and Sheets, making it part of an ecosystem rather than a standalone tool.

That architectural difference matters in practice. ChatGPT produces more self-contained, polished prose. Gemini performs better when tasks require pulling from recent data sources or working across Google's platform. Neither is universally better; they just have different home turf.

"Know what the tool was built for, and half the comparison is already settled."

2. Writing Quality: Who Produces Usable Copy Faster

After running identical prompts through both tools (product page descriptions, email subject lines, social captions, onboarding sequences), ChatGPT consistently produced output that needed fewer edits before it was publishable. Not because it's more intelligent, but because its default sentence structures feel more natural.

Gemini has a tendency to organize things it wasn't asked to organize. Ask it for a short paragraph and it might return a structured list with headers. Ask for a casual email and it sometimes fronts the answer with a preamble that sounds like an executive summary. For quick copy tasks, that habit slows things down.

The gap narrows significantly when Gemini gets detailed instructions on tone, format, and structure. But with ChatGPT, the default output starts closer to usable, which matters when you're working against a deadline.
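One way to make those detailed instructions repeatable is to keep them in a small template. The sketch below is only an illustration: `build_brief` and its field names are hypothetical, not part of either tool, and the output is plain text you would paste into ChatGPT or Gemini by hand.

```python
def build_brief(task, audience, tone, fmt, max_words):
    """Assemble a single-goal prompt that spells out tone, audience, format, and length."""
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Tone: {tone}",
        f"Format: {fmt}",
        f"Length: at most {max_words} words",
        # Asking for "only the copy" targets the unrequested headers and preambles.
        "Return only the copy itself, with no preamble or headers.",
    ]
    return "\n".join(lines)


# Example values are made up for illustration.
prompt = build_brief(
    task="Write a launch email for a new template bundle",
    audience="existing customers who bought a previous template pack",
    tone="casual, direct",
    fmt="one short paragraph plus a single call to action",
    max_words=120,
)
print(prompt)
```

Sending the same brief to both tools also keeps a side-by-side test fair, since each one gets identical constraints.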

"Speed to usable copy is the metric that actually matters in a real workday."

3. Research and Accuracy: Where the Stakes Are Higher

This is where the ground shifts. Gemini's integration with Google Search gives it a real edge on anything time-sensitive. When verifying a market stat, checking a recent product launch, or researching a trend from the past six months, Gemini pulls from live data. ChatGPT, depending on the version and whether web search is enabled, may be limited to what it learned before its knowledge cutoff.

According to a 2023 McKinsey Global Survey, 79% of respondents reported at least some exposure to generative AI, and business adoption accelerated faster than nearly any prior technology wave. Being able to incorporate recent, verified data like that into product content is where Gemini's live-search connection genuinely earns its place.

That said, both tools hallucinate. Both have produced confidently stated numbers that turned out to be wrong during testing. The rule is the same for both: treat any specific claim as a starting point for verification, not a final answer.

"Live data access is only useful if you still verify before you publish."

4. AI Tools for Digital Product Selling: Which Earns Its Place

For anyone selling digital products (courses, templates, ebooks, SaaS tools, reports), the work breaks into three main categories: writing copy that converts, building email sequences that retain buyers, and generating content that drives discovery. The two AI tools perform differently across all three.

ChatGPT writes better sales copy. Its ability to mirror a brand voice when given examples, maintain coherence across long-form content, and produce natural-sounding conversion language makes it the stronger default for product pages and nurture sequences. Gemini performs better when the product itself is information-heavy (a market research report, a trend analysis, a timely comparison guide), because its sourcing stays current.

The workflow that actually works: use ChatGPT to write, then use Gemini to research and fact-check. That's not a hedge. That's what produces the best output with the least editing time.

"The best AI workflow for digital product selling uses both tools — each for what it does best."

5. Multimodal Features: Images, Docs, and Real-World Input

Both tools now accept image and document inputs. ChatGPT does this through GPT-4o, which processes text, images, and audio natively. Gemini handles it through a multimodal architecture that was designed that way from the start, not retrofitted.

In practice, Gemini's edge is ecosystem integration. If a team already works inside Google Workspace, connecting Gemini to Drive, Docs, and Gmail creates a genuinely seamless experience. Pulling a document directly from Drive into a Gemini session takes seconds. With ChatGPT, the same task requires downloading and uploading the file manually.

ChatGPT's image analysis tends to be more granular. When given a product mockup and asked to write a description, it returns more specific language about visual details. Gemini summarizes at a higher level. For teams building detailed product listings or visual content, that difference adds up.

"Ecosystem fit matters more than raw feature specs when you're working under a real deadline."

6. Benchmarks: What the Independent Data Actually Says

Arena AI, a platform that collects anonymous human votes on AI model quality, currently ranks the pro version of Gemini second overall across writing, coding, and reasoning tasks. ChatGPT's highest tier ranks ninth on the same leaderboard. That sounds decisive until you look at the context.

Artificial Analysis, which runs the MMLU-Pro accuracy benchmark, found Gemini 3 Pro Preview leading at 89.8% accuracy across tested models, with GPT-5 variants close behind at 87.4%. These benchmarks test general academic reasoning, not the kind of copy-writing or workflow tasks most business users actually run.

DreamHost's 2026 testing of both tools against business-specific tasks found a different pattern: ChatGPT performed better for analyzing customer feedback and identifying operational patterns, while Gemini outperformed on SEO analysis and contract risk detection. The winner depends entirely on the category of task.

"Benchmark rankings shift constantly. The only reliable test is running your actual task through both tools."

7. Realistic Timelines for Getting Fluent with Either AI Tool

No tool produces great results on day one. Here's what onboarding actually looks like, without the fake optimism:

Week 1: Expect to spend more time editing outputs than generating them. Both tools need context. Prompts that feel clear often produce generic results until you learn to specify tone, audience, format, and length explicitly.

Weeks 2–4: Output quality improves as you build reusable prompt templates. Most users settle into one primary tool and use the second for specific gap tasks: research, fact-checking, or a format the main tool handles poorly.

Month 2: Time savings become measurable. Tasks that previously took two hours reliably land in 45 minutes. This is when the productivity case becomes real, not theoretical.

Month 3+: Advanced users build custom GPTs or connect Gemini to workspace automations. At this stage, the tool becomes part of the operating system of the business, not just a writing assistant that sits in a tab.
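To put a number on the Month 2 claim, the arithmetic can be sketched in a few lines. The two-hour and 45-minute figures come from the timeline above; the four runs per week is an assumption added purely for illustration, so substitute your own count.

```python
def time_saved(before_min, after_min, runs_per_week):
    """Return minutes saved per task, percent reduction, and hours saved per week."""
    saved = before_min - after_min
    pct = saved / before_min * 100
    weekly_hours = saved * runs_per_week / 60
    return saved, pct, weekly_hours


# 120 -> 45 minutes is the article's figure; 4 runs per week is assumed.
saved, pct, weekly = time_saved(before_min=120, after_min=45, runs_per_week=4)
print(f"{saved} min saved per task ({pct:.1f}%), {weekly:.1f} h per week")
# prints "75 min saved per task (62.5%), 5.0 h per week"
```

Tracking the before and after times for one recurring task is usually enough to tell whether the subscription is paying for itself.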

"Nobody is productive on day one. The realistic timeline is weeks, not hours."

8. Four Mistakes That Cost Real Users Real Time

Mistake 1: Accepting first-draft output as final

The average first draft from either tool is about 70% of the way to publication. Sending it without editing produces content that reads as generated, and audiences notice faster than most creators expect. One hour of editing now prevents ten hours of damage control later.

Mistake 2: Using one AI tool for everything

Forcing a single tool into every workflow ignores fundamental architecture differences. Teams that use ChatGPT for creative writing tasks and Gemini for research consistently save significant back-and-forth editing time compared to teams that default to one tool for everything.

Mistake 3: Skipping verification on statistics

Both tools have stated incorrect numbers with full confidence during testing. Publishing a false stat inside a digital product — a course, a market report, a comparison guide — damages credibility in ways that are genuinely difficult to walk back. Every specific claim needs a primary source check before it goes live.

Mistake 4: Starting with complex, multi-part prompts

New users often write lengthy, detailed prompts on day one and blame the tool when outputs disappoint. Simple, specific prompts with one clear goal outperform elaborate instructions about 80% of the time. Start with one focused ask, then layer in complexity only when the simple version consistently works.

"Most AI frustration traces back to expecting the tool to compensate for a vague brief."

9. Pricing: What You Actually Pay For

Both tools offer free tiers that are genuinely functional for light use. ChatGPT Plus runs at $20 per month and unlocks GPT-4o with full multimodal capabilities, advanced data analysis, and the ability to build custom GPTs. Gemini Advanced, bundled into Google AI Pro (formerly Google One AI Premium), costs the same $20 per month and includes Gemini 3 Pro access, a one-million token context window, Deep Research, and deep Workspace integration.

According to DataCamp's 2025 breakdown, Gemini Advanced also bundles 2TB of cloud storage with the subscription, something ChatGPT Plus doesn't include. For someone already paying for Google storage, that bundle actually reduces net cost.

For digital product sellers specifically, the question isn't which is cheaper, since they're priced identically. The question is which integrations match your existing workflow. Google ecosystem user? Gemini Advanced. Standalone flexibility needed? ChatGPT Plus. Either way, the subscription only pays for itself through deliberate, consistent use.

"A $20 subscription that sits unused is the most expensive kind."

10. Conclusion: The Honest Answer and Your 48-Hour Next Step

The honest answer to 'ChatGPT vs Gemini' isn't a single winner. It's a workflow. ChatGPT writes better. Gemini researches better. For digital product selling, where the output is content, copy, and credibility, the combination outperforms either AI tool alone.

The benchmark data shows Gemini leading on accuracy metrics, while real-world business testing shows ChatGPT winning on certain creative and analytical tasks. Neither finding is wrong. They're measuring different things. Your specific work determines which result matters to you.

The people who get the most from these tools aren't the ones who picked the right side. They're the ones who figured out what each tool does well, built repeatable prompts, and stopped expecting perfect output on the first attempt.

Your next step — within 48 hours:

Pick one task you do regularly: one email, one product description, one piece of content. Type the exact same prompt into both ChatGPT and Gemini. Edit both outputs to your publishable standard, then note which one started closer to where you needed it to land. That single test will tell you more than any comparison article, including this one.
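If you want a rough number for "started closer," one option is to compare each raw draft against your edited version of it. This is a minimal sketch using Python's standard `difflib`; the word-level similarity score is only a proxy for editing effort, `closeness` is a hypothetical helper, and the strings are placeholders to replace with your own text.

```python
from difflib import SequenceMatcher


def closeness(draft: str, final: str) -> float:
    """Word-level similarity in [0, 1]; higher means fewer changes were needed."""
    return SequenceMatcher(None, draft.split(), final.split()).ratio()


# Placeholder text: paste each tool's raw output and your edited version of it.
draft_a = "Our new template pack ships today with ten layouts included."
final_a = "Our new template pack ships today with ten layouts."
draft_b = "We are pleased to announce the availability of a new template pack for purchase."
final_b = "Our new template pack is available now."

score_a = closeness(draft_a, final_a)
score_b = closeness(draft_b, final_b)
print(f"draft A: {score_a:.2f}  draft B: {score_b:.2f}")
```

The score will not capture tone or accuracy, so treat it as a tiebreaker alongside your own judgment of how long each edit took.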

11. FAQs

ChatGPT produces more natural, conversion-ready prose in most tests. For product pages, email sequences, and landing page copy, it requires fewer edits to reach publishable quality. Gemini is the stronger choice when the copy needs to incorporate current market data or recent statistics.

Yes — both free tiers are functional for light use. The practical limitations are speed, message caps, and access to document uploads and image analysis. For consistent professional output, the $20/month paid tier of whichever tool fits your workflow will save more time than it costs within the first two weeks.

Neither should be trusted without verification. Gemini has an advantage on recent information through its Google Search integration, but both have produced confidently stated incorrect numbers during testing. Treat every AI-generated claim as a research starting point, not a finished source.

No formal training is required. What matters is specificity. Telling the tool what audience it's writing for, what tone to use, what format you need, and approximately how long the output should be will produce dramatically better results than a one-line prompt. That's not engineering — it's a clear brief.

Start with ChatGPT if writing is the primary bottleneck: product descriptions, emails, and content drafts. Start with Gemini if the business is already inside Google Workspace and research tasks are frequent. Most creators end up using both within three months regardless of where they start.