A procurement manager at a mid-sized logistics firm shared something that stuck: "We spent fourteen months and $600,000 building a chatbot that could answer questions about shipment status. Then we realized our customers didn't want answers. They wanted the problem fixed." Six weeks after deploying an enterprise agentic AI system that could actually reroute shipments and update carriers on its own, customer satisfaction jumped 22 points. The chatbot, for all its polish, never moved a single package.

That story isn't an outlier. Across 51 enterprise AI deployments studied by the Stanford Digital Economy Lab in April 2026, researchers found that the gap between pilot and measurable ROI almost always came down to one thing: whether the AI was answering or acting.

This article cuts through the vendor noise. It explains what enterprise agentic AI actually is, where it genuinely delivers in 2026, how to procure it without getting burned, and what governance has to look like before a single agent goes live.

What Enterprise Agentic AI Actually Is And Why Vendors Keep Confusing You

Sit through enough vendor demos and the word "agentic" starts to feel meaningless. Last year, an evaluation team reviewed a platform billing itself as enterprise agentic AI. It was a chatbot with three API integrations and a memory feature. That's not an agent. That's a FAQ page with ambitions.

A genuine enterprise agentic AI system has three properties that separate it from everything else on the market:

Goal-directed autonomy: Given an objective such as "resolve this vendor invoice dispute," the agent breaks it into sub-tasks and executes them without step-by-step direction from a human.

Tool and system integration: The agent reads from and writes to external systems: CRMs, ERPs, databases, email. Not just reads. Writes. That's the distinction that changes your integration budget and what failure looks like.

Persistent memory and context: It retains state across sessions. A conversation that ended last Tuesday informs what the agent does this Tuesday. Standard chatbots have no memory of prior sessions.
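
To make the three properties concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `Agent` class, the hard-coded `plan` method (a stand-in for what a model would decide), the two tools, and the JSON file used as memory are illustrative assumptions, not any vendor's API.

```python
import json
import tempfile
from pathlib import Path


class Agent:
    """Toy agent: plans sub-tasks, calls tools that read and write, persists state."""

    def __init__(self, memory_path, tools):
        self.memory_path = Path(memory_path)
        self.tools = tools
        # Persistent memory: reload whatever earlier sessions left behind.
        if self.memory_path.exists():
            self.memory = json.loads(self.memory_path.read_text())
        else:
            self.memory = {"completed": []}

    def plan(self, objective):
        # Hypothetical stand-in for model-driven planning: a fixed decomposition.
        return ["fetch_invoice", "update_record"]

    def run(self, objective):
        # Goal-directed autonomy: work through sub-tasks without a human per step.
        for step in self.plan(objective):
            if step in self.memory["completed"]:
                continue  # already finished in an earlier session
            self.tools[step]()
            self.memory["completed"].append(step)
            self.memory_path.write_text(json.dumps(self.memory))
        return self.memory["completed"]


records = {"invoice-17": "disputed"}  # stand-in for an ERP table
calls = []


def fetch_invoice():
    calls.append("read")
    return records["invoice-17"]


def update_record():
    calls.append("write")  # the write is what separates an agent from a chatbot
    records["invoice-17"] = "resolved"


tools = {"fetch_invoice": fetch_invoice, "update_record": update_record}
memory_file = Path(tempfile.mkdtemp()) / "agent_memory.json"

Agent(memory_file, tools).run("resolve this vendor invoice dispute")
# A second session reloads the memory file and repeats nothing.
Agent(memory_file, tools).run("resolve this vendor invoice dispute")
```

The second `run` call does no work because the memory file already records both steps. A real system would key memory by objective and let a model produce the plan; the sketch only shows where each property lives.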

As Andrew McNamara, Director of Applied Machine Learning at Shopify, told InfoWorld in April 2026: agentic systems run continuously until a task is complete; they're not answering a question, they're finishing a job. That's the real line.

The traditional chatbot responds to a single prompt, operates read-only, gets evaluated on response quality, and is governed by content filters. The agentic system executes toward a goal across sessions, reads and writes to external systems, gets evaluated on task completion rate, and is governed by action policies and audit trails.

One thing nobody puts in the pitch deck: agentic systems dramatically increase blast radius. A hallucinating chatbot gives a bad answer. A hallucinating agent can send the wrong email, update the wrong record, or trigger an erroneous procurement order. The stakes are categorically different and governance needs to reflect that before deployment, not after.

"The difference between a chatbot and an agent is the difference between a receptionist and a colleague who actually does the work."

The 95% Failure Rate Nobody Wants to Talk About

Here's a number that should make every CTO uncomfortable. A 2025 study from MIT's NANDA initiative found that 95% of generative AI pilot programs failed to produce measurable business value. The Stanford Digital Economy Lab's 2026 playbook, based on 51 real deployments rather than survey data, confirmed the same pattern.

The difference between the 5% that succeeded and the 95% that didn't wasn't the AI model. It was the organization. Its data readiness, its documented processes, and its willingness to actually change how work gets done.

When one logistics firm migrated from a GPT-4-based chatbot to a fully agentic system, their first assumption was that it was a model upgrade. The consulting team spent the first two weeks correcting that assumption. It was an architectural overhaul, a different procurement process, a different integration project, and a different change management challenge entirely.

95% of GenAI pilots fail to produce measurable business value (MIT NANDA, 2025).

4.2x productivity lift in agentic vs. chatbot deployments (McKinsey, 2026).

$2.1M average remediation cost when agents deploy without governance (IBM, 2025).

Also worth noting: Gartner estimates only 130 of 2,000+ vendors currently marketing themselves as "agentic AI" are genuinely building autonomous capability. The rest are rebranding chatbots or RPA workflows. That's a lot of expensive confusion in the procurement market.

"Same technology, same use cases, vastly different outcomes. The difference was never the AI model. It was always the organization." (Stanford Digital Economy Lab, 2026)

Where Enterprise Agentic AI Actually Delivers in 2026

Based on real deployments and cross-checked against research from Stanford and McKinsey, four use case categories consistently generate measurable ROI in 2026. These aren't theoretical; they're production deployments with documented results.

Procurement automation.

Agents that monitor supplier portals, flag anomalies, draft purchase orders, and route approvals without human involvement at every handoff. One manufacturing client cut procurement cycle time by 61% in eight months. The agent didn't replace the procurement team; it eliminated the four hours per day they spent pulling data from three different systems that didn't talk to each other.

IT operations and incident response.

Agents that triage alerts, pull diagnostics from multiple systems, and execute standard runbooks on their own. According to ServiceNow's Heath Ramsey, these agents surface contextual data across systems, check prior resolutions, issue fixes, and loop in team members: a chain of distinct steps that previously required a human at every handoff. Mean time to resolution dropped from 47 minutes to 11 minutes in one documented deployment.

Compliance monitoring.

Agents that continuously scan internal communications and transactions against regulatory frameworks. Particularly effective in financial services where rule sets are explicit and codified. The agent doesn't replace compliance officers; it handles the surveillance work that previously required hours of manual review.

Customer onboarding.

Multi-step agents coordinating KYC verification, document collection, system provisioning, and welcome communications, replacing workflows that previously required four separate human handoffs and days of elapsed time.

Where does it reliably fail? Any domain requiring genuine contextual judgment that can't be rule-specified. High-stakes, low-reversibility decisions. Organizations with messy data pipelines, because agents amplify data quality problems rather than compensating for them. And teams that haven't documented their own processes; agents can't automate what isn't understood.

"Start with one use case, clean data, and a defined scope. Every scaled success we've seen followed that pattern."

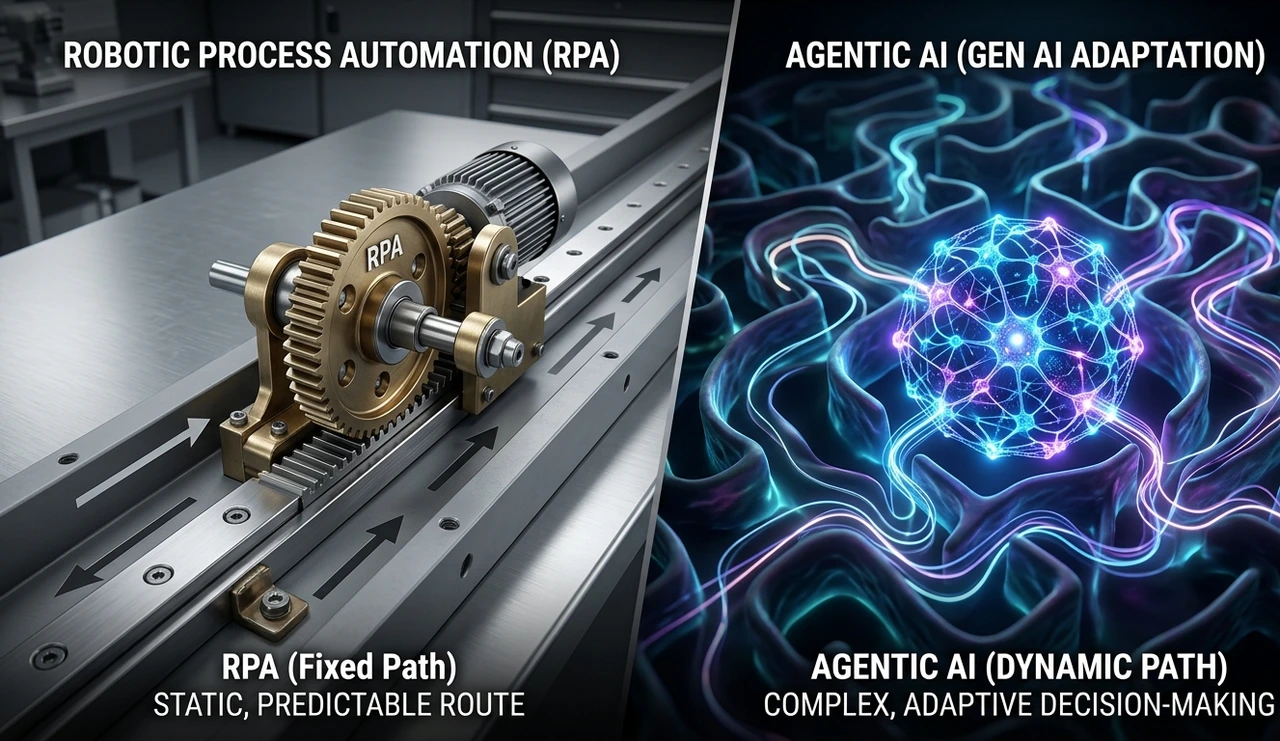

How Agentic AI Differs From RPA And Why Both Still Have a Role

The comparison between enterprise agentic AI and RPA (Robotic Process Automation) comes up in almost every procurement conversation. The short answer: they solve different problems, and most enterprises will run both.

RPA follows rigid, pre-scripted rules and breaks the moment the environment changes. A loan operations team at a mid-sized bank spent nine months building an RPA bot to extract data from borrower documents. It worked perfectly in testing. Three weeks into production, a lender switched from PDFs to scanned images with handwritten notes. The bot failed. According to research cited by Mightybot.ai (2025), Forrester found that 50% of RPA projects stall at exactly this point: when process variability exceeds what pre-programmed scripts can handle.

Agentic AI uses large language models to interpret context, make judgment calls, and adapt to exceptions without predefined rules for every scenario. It handles the exception-heavy 50% of workflows that RPA couldn't scale to. RPA still wins in compliance-heavy, deterministic workflows with zero format variation and auditable rule execution. The failure isn't RPA as a technology; it's forcing RPA into processes that require adaptive decision-making.
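
One way to picture running both is a router that tries the scripted path first and hands anything it can't parse to the adaptive path. The sketch below is a minimal illustration under stated assumptions: the invoice line format, `rpa_extract`, `route`, and `stub_agent` are all hypothetical, and the "agent" is a placeholder that only queues the item rather than a real model-backed handler.

```python
import re

# Hypothetical fixed format the scripted path was built for: INV-0042;199.90;EUR
INVOICE_LINE = re.compile(r"^INV-(\d{4});(\d+\.\d{2});([A-Z]{3})$")


def rpa_extract(line):
    """Rigid, scripted path: succeeds only on the exact expected format."""
    match = INVOICE_LINE.match(line)
    if match is None:
        raise ValueError("format not covered by the script")
    number, amount, currency = match.groups()
    return {"invoice": number, "amount": float(amount), "currency": currency}


def route(line, agent_handler):
    """Try the deterministic script first; hand exceptions to the adaptive path."""
    try:
        return "rpa", rpa_extract(line)
    except ValueError:
        return "agent", agent_handler(line)


def stub_agent(line):
    # Placeholder for a model-backed handler; here it only queues the item.
    return {"raw": line, "status": "queued for agent"}


clean = route("INV-0042;199.90;EUR", stub_agent)
messy = route("Invoice 42, approx 200 euros", stub_agent)
```

The well-formed line stays on the auditable scripted path, and only the exception reaches the agent, which keeps the deterministic share of the workload deterministic.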

Multi-agent systems add another layer. Forrester and Gartner both identify 2026 as the breakthrough year for deployments where specialized agents collaborate under central orchestration. One agent qualifies inbound leads. A second drafts personalized outreach. A third validates compliance requirements before anything gets sent. These agents share memory, coordinate handoffs, and recover from failures. RPA never attempted anything like it.
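
The lead-outreach example can be sketched as a small orchestrator. This is an illustration only: the three agent functions use hard-coded rules where a real system would call a model, and `SharedMemory`, `orchestrate`, and the lead fields are hypothetical names. It shows shared memory and coordinated handoffs, not failure recovery.

```python
from dataclasses import dataclass, field


@dataclass
class SharedMemory:
    notes: dict = field(default_factory=dict)  # state every agent can read
    log: list = field(default_factory=list)    # ordered record of handoffs


def qualify(lead, memory):
    # Hypothetical rule standing in for a model's judgment.
    memory.notes["qualified"] = lead["employees"] >= 100
    return memory.notes["qualified"]


def draft(lead, memory):
    memory.notes["draft"] = f"Hi {lead['name']}, a note for {lead['company']}."
    return True


def check_compliance(lead, memory):
    # Block outreach to contacts who have opted out.
    memory.notes["compliant"] = not lead.get("opted_out", False)
    return memory.notes["compliant"]


def orchestrate(lead):
    """Run the agents in order; stop at the first one that says no."""
    memory = SharedMemory()
    pipeline = [("qualify", qualify), ("draft", draft), ("compliance", check_compliance)]
    for name, agent in pipeline:
        ok = agent(lead, memory)
        memory.log.append((name, ok))
        if not ok:
            return {"sent": False, "stopped_at": name, "log": memory.log}
    return {"sent": True, "message": memory.notes["draft"], "log": memory.log}


result = orchestrate({"name": "Ana", "company": "Acme", "employees": 250})
blocked = orchestrate({"name": "Bo", "company": "Initech", "employees": 400, "opted_out": True})
```

Nothing is sent unless the compliance agent agrees, and the log records which agent stopped the chain.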

"Agentic AI handles the 50% of workflows RPA couldn't touch. The smart move is running both, not replacing one with the other."

AI Procurement in 2026: Stop Evaluating the Wrong Things

Most enterprise procurement teams are still scoring AI vendors on benchmark performance. That's almost entirely the wrong criterion for agentic systems. A benchmark score tells you how well the model answers questions. You're buying a system that takes actions.

The following framework was used in a 12-vendor evaluation for a global bank in Q1 2026. The vendor that won had mid-tier benchmark scores but the most mature governance tooling. The CISO made the final call, not the AI team.

Non-negotiables to evaluate:

Orchestration architecture: Does the vendor support multi-agent coordination? Single-agent systems hit ceilings fast. Ask whether their architecture supports agent-to-agent delegation, not just human-to-agent prompting.

Tool integration depth: Native connectors to your existing stack matter more than raw model capability. Ask for a live integration demo with your actual systems, not a vendor sandbox.

Audit and observability: Every agent action must be logged, attributable, and reviewable. Ask for a live audit trail demo. Specifically, ask the vendor to show you what the log looks like when an agent fails mid-task.

Human-in-the-loop controls: Can you configure which action categories require human approval? This should be granular (read-only, write with logging, write with approval, never permitted), not a binary on/off switch.
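
A minimal sketch of what granular controls plus an attributable audit trail could look like in code. The four tiers come from the list above; the action names, the `POLICY` mapping, and the `authorize` function are hypothetical, and a production system would persist the log somewhere tamper-evident rather than in a Python list.

```python
from datetime import datetime, timezone
from enum import Enum


class Authority(Enum):
    READ_ONLY = "read-only"
    WRITE_WITH_LOGGING = "write with logging"
    WRITE_WITH_APPROVAL = "write with approval"
    NEVER_PERMITTED = "never permitted"


# Hypothetical mapping from action category to permission tier.
POLICY = {
    "lookup_shipment": Authority.READ_ONLY,
    "update_carrier": Authority.WRITE_WITH_LOGGING,
    "issue_purchase_order": Authority.WRITE_WITH_APPROVAL,
    "delete_vendor": Authority.NEVER_PERMITTED,
}

audit_log = []


def authorize(agent_id, action, approved_by=None):
    """Return True if the action may proceed; record every decision."""
    # Actions missing from the policy fall through to the most restrictive tier.
    level = POLICY.get(action, Authority.NEVER_PERMITTED)
    if level is Authority.NEVER_PERMITTED:
        allowed = False
    elif level is Authority.WRITE_WITH_APPROVAL:
        allowed = approved_by is not None
    else:
        allowed = True
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "action": action,
        "level": level.value,
        "approved_by": approved_by,
        "allowed": allowed,
    })
    return allowed
```

Denied attempts are logged as well as permitted ones, so the trail shows what the agent tried to do, not only what it did. Defaulting unknown actions to "never permitted" is a design choice in this sketch, not something the framework above prescribes.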

The Stanford research confirmed what practitioners see directly: organizations that underallocate to non-software costs (governance tooling, training, change management) have the highest remediation bills. Plan for 1.5x to 2x the software licensing cost in implementation work for the first deployment.
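
The 1.5x to 2x rule is easy to turn into numbers. The sketch below assumes a hypothetical $400,000 licensing cost and treats all implementation work as non-software spend; both are illustrative assumptions, not figures from the research.

```python
def first_deployment_budget(licensing_cost):
    """Apply the 1.5x to 2x implementation rule of thumb to a licensing figure."""
    scenarios = {}
    for label, multiplier in (("low", 1.5), ("high", 2.0)):
        implementation = licensing_cost * multiplier
        total = licensing_cost + implementation
        scenarios[label] = {
            "implementation": implementation,
            "total": total,
            # Share of total spend that is not software licensing.
            "non_software_share": implementation / total,
        }
    return scenarios


budget = first_deployment_budget(400_000)  # hypothetical licensing cost
for label, row in budget.items():
    print(label, row["implementation"], row["total"], f"{row['non_software_share']:.0%}")
```

On that license, implementation lands between $600,000 and $800,000, for a total of $1.0M to $1.2M. Under this reading, non-software work is roughly 60% to 67% of total spend.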

"The vendor with the best benchmark score lost to the vendor with the best audit trail. Your CISO will make that call."

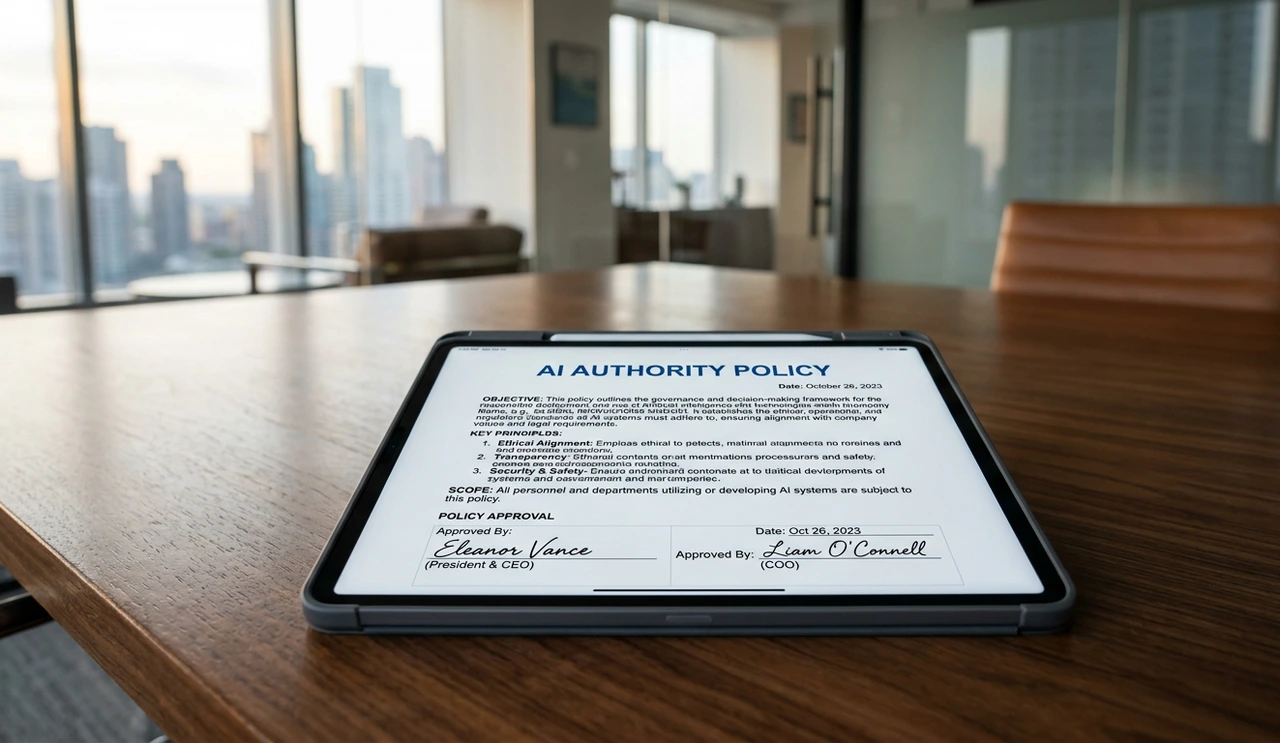

Corporate AI Governance: The Part That Kills Scaled Deployments

Here's the contrarian position worth defending: most enterprise AI governance failures in 2025 weren't technology failures. They were process failures wearing technology clothing. The AI did exactly what it was configured to do. The problem was the configuration reflected no meaningful governance thinking.

The organizations that avoided the $2.1M average remediation cost IBM puts on ungoverned agentic deployments did four specific things before a single agent went live:

Defined agent authority levels in a policy document. Every action type the agent could take got mapped to a permission level: read-only, write with logging, write with approval, never permitted. Not a technical configuration, but a signed policy document reviewed by legal, compliance, and business leadership.

Built human oversight roles, not just override buttons. An override button assumes agents work correctly most of the time. An oversight role means a human actively reviews agent behavior patterns weekly, looking for drift, systematic errors, or edge cases accumulating below alert thresholds.

Ran red-team exercises specifically for agents. Standard security red-teaming doesn't cover agentic failure modes: prompt injection via external data sources, goal misgeneralization, tool misuse. In one exercise, an agent was manipulated by a malicious supplier email into creating a fraudulent purchase order. No existing security protocol would have caught it.

Measured agent behavior, not just agent output. Output metrics tell you what the agent produced. Behavior metrics tell you which tools it used, which paths it took, where it hesitated or looped. That's where problems appear before they become costly.
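
As a rough illustration of behavior metrics, the sketch below summarizes a trace of tool calls: how often each tool was used and whether the agent called the same tool repeatedly in a row, which is one crude signal of looping. The `behavior_metrics` function, the trace format (a list of tool names in call order), and the threshold of three are all assumptions for the example.

```python
from collections import Counter


def behavior_metrics(trace, loop_threshold=3):
    """Summarize a list of tool-call names in the order the agent made them."""
    tool_counts = Counter(trace)
    # Longest run of the same tool called back-to-back: a crude looping signal.
    longest_run, current_run = 0, 0
    previous = None
    for call in trace:
        current_run = current_run + 1 if call == previous else 1
        longest_run = max(longest_run, current_run)
        previous = call
    return {
        "total_calls": len(trace),
        "tool_counts": dict(tool_counts),
        "longest_repeat": longest_run,
        "possible_loop": longest_run >= loop_threshold,
    }


# Hypothetical trace from one agent task.
trace = ["search_vendor", "read_invoice", "read_invoice", "read_invoice", "update_record"]
metrics = behavior_metrics(trace)
```

This task may have produced a correct output, yet the trace shows three consecutive `read_invoice` calls. That is the kind of pattern an oversight role would want to see before it becomes costly.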

One financial services client runs an "Agent Review Board," a standing committee that meets monthly to review agent behavior logs, approve new agent capabilities, and sign off on scope expansions. It adds governance overhead. They've had zero material incidents across 18 months of agentic deployment. The overhead is worth it.

McKinsey warns that 40% of agentic initiatives could be abandoned by 2027 due to governance failures, not technical limitations. That's not a technology problem. It's an organizational readiness problem that shows up as a technology problem.

"Governance failures are process failures dressed up as technology failures. Fix the process first."

Scaling Enterprise AI: The Organizational Reality Nobody Puts in the Pitch Deck

The hardest part of scaling agentic AI isn't the technology. Pilots succeed and scaled deployments fail because the organizational model doesn't evolve alongside the technology. The agent gets bigger; the org doesn't change to match.

Job design: Roles need to shift from executing tasks to reviewing and improving agent work. This requires active reskilling programs, not a company-wide email saying "AI is here to help."

Data ownership: Agents need clean, permissioned data. The Stanford research confirmed what practitioners see directly: organizations assume their data is clean enough and discover it isn't only after the agent starts surfacing problems at scale. Budget 20–30% of your implementation timeline for data preparation before the agent goes anywhere near production.

Incident response: You need an AI-specific incident playbook before you need it. Who gets paged when an agent makes an error? Who has kill-switch authority? Who runs the post-mortem? These questions need written answers before go-live, not after.

Vendor concentration risk: As agents become operationally critical, single-vendor dependency becomes a board-level risk. Design your multi-vendor architecture early.

On the headcount question: Stanford's 2026 analysis of high-frequency payroll data found a 16% relative decline in employment for workers in AI-exposed occupations, with software developers aged 22–25 seeing nearly 20% drops. The staffing math isn't theoretical anymore. The firms that captured real financial return redeployed people to higher-value work. The ones that cut first and figured out the rest later spent more in the end.

"Scale doesn't happen by adding agents. It happens by rebuilding the organization around them."

Frequently Asked Questions About Enterprise Agentic AI

How is agentic AI different from RPA?

RPA follows rigid, pre-scripted rules and breaks when the environment changes. Agentic AI reasons about novel situations, adapts its approach, and handles exceptions, making it suitable for unstructured tasks RPA can't touch. They're complementary: many enterprises use agents to handle exceptions that previously required human intervention in RPA workflows.

How long does an enterprise agentic AI deployment take?

For a narrow, well-defined use case with clean data: 3–5 months from pilot to production. For broader deployments spanning multiple systems: 9–18 months when you include data preparation, governance setup, integration work, and change management. Any vendor promising enterprise-wide transformation in six weeks is selling you a chatbot.

How much should you budget beyond software licensing?

Plan for 1.5x to 2x the software licensing cost in implementation and integration work. Organizations that allocate less than 30% of total AI spend to non-software costs have the highest remediation bills later.

Which industries are furthest along?

Financial services leads in governance maturity, because regulatory pressure has forced discipline. Technology companies lead in deployment breadth. Healthcare is advancing in administrative workflows but remains conservative on clinical-adjacent tasks. Manufacturing is seeing the fastest ROI in supply chain and quality control.

What is the biggest obstacle to a successful deployment?

Data readiness. Organizations assume their data is clean enough and discover it isn't only after the agent starts surfacing problems at scale. Budget 20–30% of your implementation timeline for data preparation.

The One Concrete Step Worth Taking in the Next 48 Hours

Replacing chatbots with enterprise agentic AI isn't a technology decision; it's an organizational readiness question. The research is consistent: the organizations that succeed start narrow, govern early, and redesign roles before they need to.

The contrarian truth worth holding: most enterprises aren't ready to deploy agents well yet. Not because the technology isn't capable. Because data pipelines aren't clean, governance frameworks don't exist, and nobody has written the incident response playbook.

Here's the one concrete step worth taking before anything else: schedule a half-day session with your legal, compliance, and IT leadership to map agent authority levels for a single candidate use case. Not a technology evaluation. Not a vendor demo. A policy document.

Decide what the agent is and isn't allowed to do, get it signed, and then start the procurement conversation. That document is the foundation everything else gets built on. Without it, you're not deploying enterprise agentic AI; you're running an expensive experiment with no guardrails.

"Enterprise agentic AI done right doesn't eliminate human judgment — it redirects it to decisions that actually require it."

About the Author

This article was written by a Senior Enterprise AI Strategist with 15+ years in enterprise software architecture and a background as a CTO advisor to Fortune 500 firms across manufacturing, financial services, and logistics. They have led agentic AI evaluations covering 12+ vendors, governed deployments spanning 10+ integrated systems, and run red-team exercises specifically designed for enterprise agent failure modes. Their work has appeared in executive briefings at Gartner symposia and Stanford Digital Economy Lab practitioner roundtables. They consult independently on AI procurement strategy and corporate AI governance frameworks.

Sources & References

1. Pereira, E., Graylin, A.W., & Brynjolfsson, E. (2026). The Enterprise AI Playbook: Lessons from 51 Successful Deployments. Stanford Digital Economy Lab.

2. Doerrfeld, B. (April 2026). Best practices for building agentic systems. InfoWorld.

3. MIT NANDA Initiative (2025). Generative AI Pilot Outcomes in Enterprise Settings.

4. IBM Institute for Business Value (2025). Enterprise AI Governance Report.

5. McKinsey & Company (2026). The State of AI in the Enterprise.

6. Mindflow Blog (2026). Agentic Automation vs RPA: What Actually Changes for Enterprise IT. mindflow.io

7. Mightybot.ai / Forrester Research (2025). RPA stall rates and agentic automation transitions.

8. Gartner (2025–2026). Agentic AI vendor landscape analysis.

9. The AI Consultancy (2026). From Chatbot to Co-worker: What Agentic AI Actually Means for Your Business. Medium.